Natcap vs. ENCORE: How Do They Compare?

What is ENCORE? ENCORE (Exploring Natural Capital Opportunities, Risks and Exposure) is a classification system that rates business activities on...

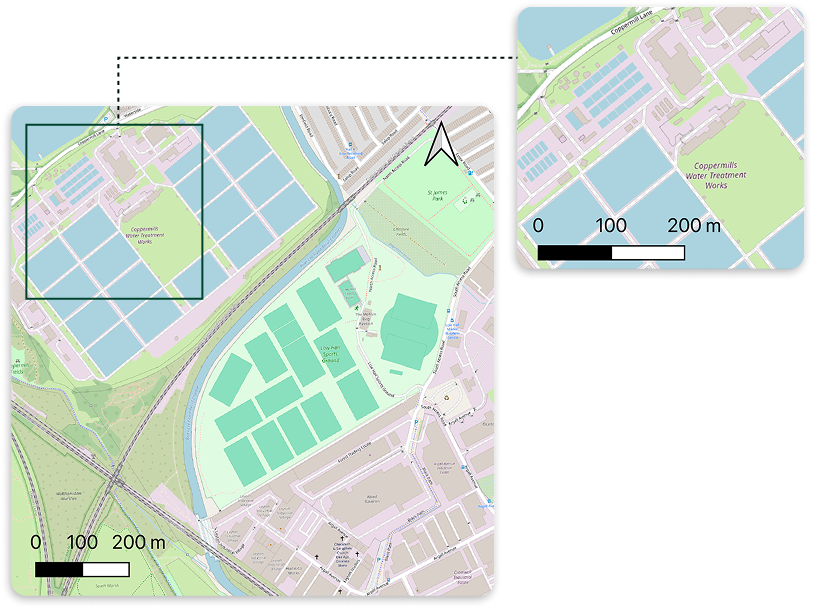

Nature doesn’t change in neat administrative units. It changes field by field, river reach by river reach, and sometimes metre by metre. That’s why spatial granularity—how “fine” or “coarse” your spatial data is—often determines whether nature and biodiversity insights are decision-ready, or merely descriptive.

Spatial granularity describes the size of the spatial unit used to represent reality. It determines how accurately features can be represented, analysed, and mapped. In practice, it is usually one of two things:

What this looks like in the real world:

A useful way to think about spatial granularity is: what is the smallest change you need to detect to make a better decision? That “smallest meaningful unit” varies by use case. But the principle stays consistent: coarse data tends to average away local variation and differences, while finer data can reveal them—if you handle dataset quality and uncertainty properly.1
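To make the averaging effect concrete, here is a minimal sketch in Python. It assumes a made-up 100 m by 100 m patch of forest stored as a 10 by 10 grid of 10 m cells, with a small clearing in one corner. The grid values and the `aggregate` helper are illustrative assumptions, not part of any Natcap dataset or API.

```python
def aggregate(grid, factor):
    """Average non-overlapping factor x factor blocks of a square grid."""
    size = len(grid)
    if size % factor != 0 or any(len(row) != size for row in grid):
        raise ValueError("grid must be square and divisible by factor")
    coarse = []
    for r in range(0, size, factor):
        coarse_row = []
        for c in range(0, size, factor):
            block = [grid[r + i][c + j]
                     for i in range(factor)
                     for j in range(factor)]
            coarse_row.append(sum(block) / len(block))
        coarse.append(coarse_row)
    return coarse


# Hypothetical fine grid: 10 x 10 cells of 10 m each (1 = forest, 0 = cleared).
fine = [[1] * 10 for _ in range(10)]
for r in range(3):
    for c in range(3):
        fine[r][c] = 0  # a 30 m x 30 m clearing in one corner

# Collapse the whole patch into a single 100 m cell.
coarse = aggregate(fine, 10)

print(min(min(row) for row in fine))  # lowest forest value at 10 m
print(coarse)                         # forest fraction of the single 100 m cell
```

At 10 m the clearing shows up as cells with a value of 0. At 100 m the same patch becomes one cell that is 91% forest, so the clearing survives only as a slightly lower average and its location inside the cell is lost.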

Nature- and biodiversity-related decision making is increasingly spatial by necessity, because risks and impacts depend on where an activity occurs, not just what sector it sits in. Key regulations and assessment frameworks, such as TNFD, SBTN, and the EU CSRD's ESRS E4, highlight the importance of “place-based” analysis.

This is particularly important because the main drivers of nature loss—land/sea-use change, direct exploitation, climate change, pollution, and invasive alien species—are unevenly distributed and concentrate in specific places.

Here are a few concrete examples of how spatial data supports better nature decisions:

Over the last two decades, spatial granularity in land-cover monitoring has improved through three reinforcing shifts:

Spatial granularity changes what you can see, what you can prove, and where you take action.

Consider a very practical example: deforestation risk near farms.

This materially changes decisions such as:

The same logic applies to biodiversity hotspots and corridors.

Example from the Natcap platform: 10 m vs 100 m granularity at a site covering more than 500,000 ha
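For a rough sense of scale in the 10 m vs 100 m comparison, the short Python sketch below counts how many square grid cells are needed to cover a site. It uses 500,000 ha as a lower bound for the site area and assumes perfectly square cells with no edge effects. The `cell_count` helper is hypothetical and only illustrates the arithmetic.

```python
SQUARE_METRES_PER_HECTARE = 10_000


def cell_count(area_ha, cell_size_m):
    """Number of square cells of side cell_size_m needed to tile area_ha."""
    area_m2 = area_ha * SQUARE_METRES_PER_HECTARE
    return area_m2 // (cell_size_m ** 2)


site_area_ha = 500_000  # lower bound taken from the example above

cells_10m = cell_count(site_area_ha, 10)
cells_100m = cell_count(site_area_ha, 100)

print(cells_10m)                # 50000000
print(cells_100m)               # 500000
print(cells_10m // cells_100m)  # 100
```

Under these assumptions, each 100 m cell covers one hectare, and moving to 10 m cells gives 100 times as many observations over the same site.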

One important caveat: higher resolution isn’t automatically “better”.

At Natcap, the goal is not “more data”. It is to deliver decision-ready nature intelligence by translating spatial science into metrics that organisations can use for governance, risk management, procurement, and reporting.

A key part of that is choosing datasets with the right spatial granularity for the decision at hand. Natcap draws on more than 40 different geospatial datasets with global coverage to assess the state of nature at the best available granularity, across detailed categories such as soil quality, land cover, water availability, and pollution levels.

Granularity is not a nice-to-have in this context. It is the difference between:

Although some gaps still exist, spatial data is constantly improving. Natcap continuously tracks advances in satellites, land-cover products, and analytical methods so that the datasets behind your nature metrics keep pace with the decisions (and scrutiny) they need to support.

Ready to move from broad screening to location-specific action? See how Natcap’s nature intelligence platform uses high-resolution spatial data to help teams prioritise sites, assess risk, and make more defensible nature-related decisions.


Sources
